|

10 | 10 | " <td align=\"center\"><a target=\"_blank\" href=\"http://introtodeeplearning.com\">\n", |

11 | 11 | " <img src=\"https://i.ibb.co/Jr88sn2/mit.png\" style=\"padding-bottom:5px;\" />\n", |

12 | 12 | " Visit MIT Deep Learning</a></td>\n", |

13 | | - " <td align=\"center\"><a target=\"_blank\" href=\"https://colab.research.google.com/github/aamini/introtodeeplearning/blob/2025/lab1/solutions/TF_Part1_Intro_Solution.ipynb\">\n", |

| 13 | + " <td align=\"center\"><a target=\"_blank\" href=\"https://colab.research.google.com/github/aamini/introtodeeplearning/blob/master/lab1/solutions/TF_Part1_Intro_Solution.ipynb\">\n", |

14 | 14 | " <img src=\"https://i.ibb.co/2P3SLwK/colab.png\" style=\"padding-bottom:5px;\" />Run in Google Colab</a></td>\n", |

15 | | - " <td align=\"center\"><a target=\"_blank\" href=\"https://github.com/aamini/introtodeeplearning/blob/2025/lab1/solutions/TF_Part1_Intro_Solution.ipynb\">\n", |

| 15 | + " <td align=\"center\"><a target=\"_blank\" href=\"https://github.com/aamini/introtodeeplearning/blob/master/lab1/solutions/TF_Part1_Intro_Solution.ipynb\">\n", |

16 | 16 | " <img src=\"https://i.ibb.co/xfJbPmL/github.png\" height=\"70px\" style=\"padding-bottom:5px;\" />View Source on GitHub</a></td>\n", |

17 | 17 | "</table>\n", |

18 | 18 | "\n", |

|

210 | 210 | "\n", |

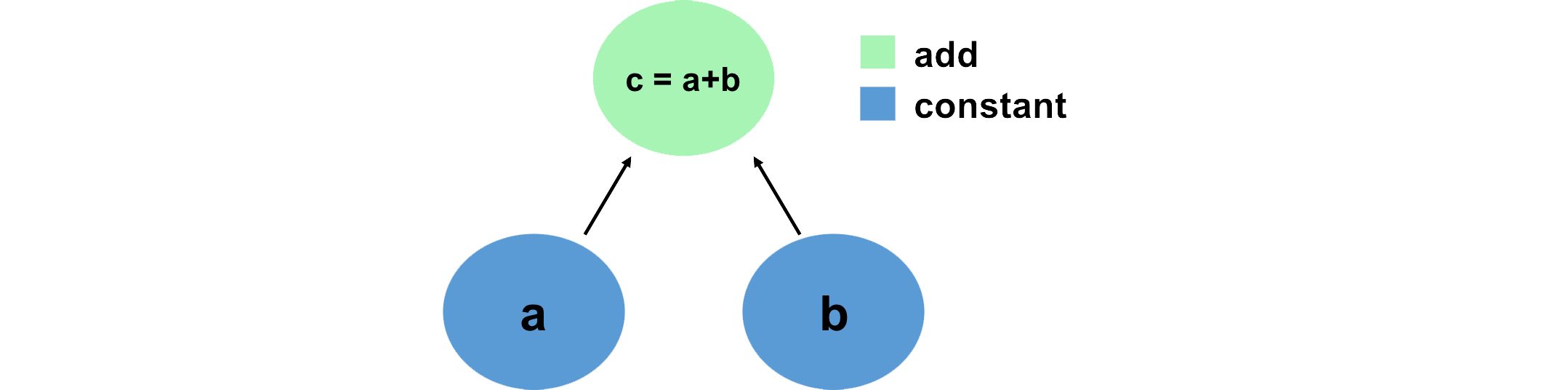

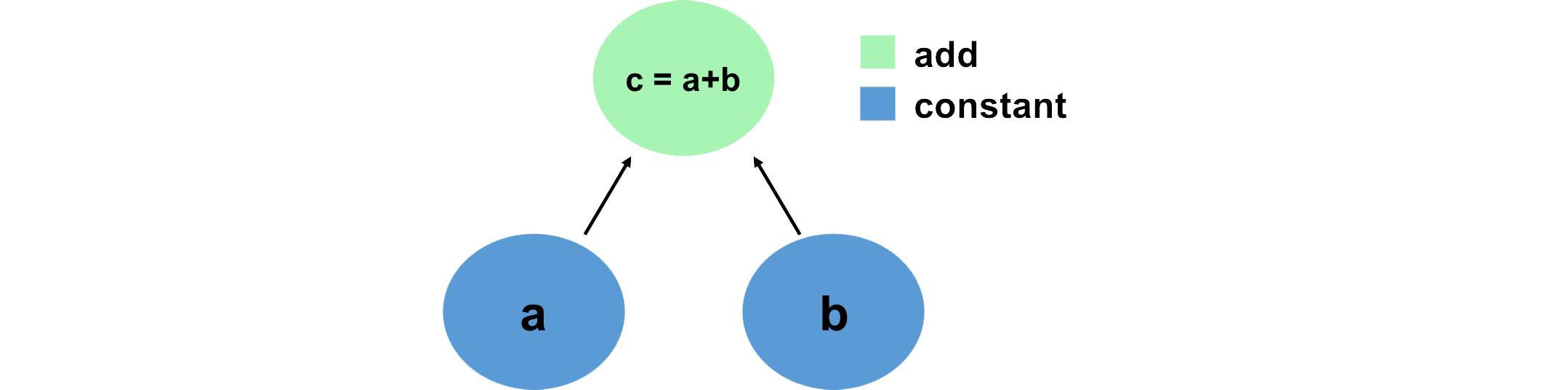

211 | 211 | "A convenient way to think about and visualize computations in TensorFlow is in terms of graphs. We can define this graph in terms of Tensors, which hold data, and the mathematical operations that act on these Tensors in some order. Let's look at a simple example, and define this computation using TensorFlow:\n", |

212 | 212 | "\n", |

213 | | - "" |

| 213 | + "" |

214 | 214 | ] |

215 | 215 | }, |

216 | 216 | { |

|

242 | 242 | "\n", |

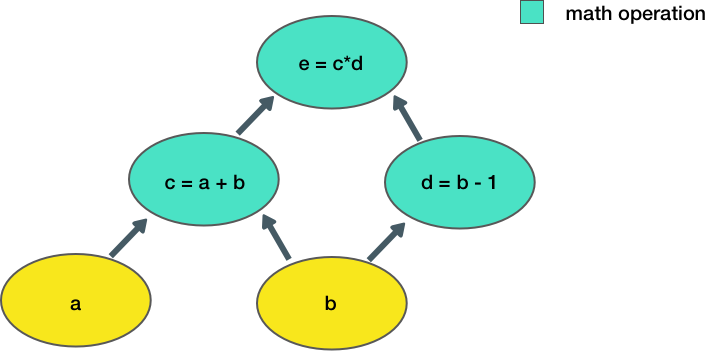

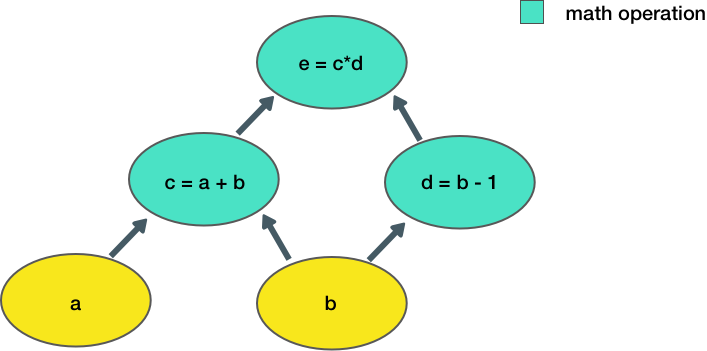

243 | 243 | "Now let's consider a slightly more complicated example:\n", |

244 | 244 | "\n", |

245 | | - "\n", |

| 245 | + "\n", |

246 | 246 | "\n", |

247 | 247 | "Here, we take two inputs, `a, b`, and compute an output `e`. Each node in the graph represents an operation that takes some input, does some computation, and passes its output to another node.\n", |

248 | 248 | "\n", |
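As a reading aid for the context line above, here is a minimal plain-Python sketch of a two-input graph that produces an output `e`. The diff does not show the intermediate nodes, so the operations `c = a + b`, `d = b - 1`, and `e = c * d`, along with the function name `compute_e`, are assumptions chosen for illustration. It uses ordinary Python arithmetic instead of TensorFlow ops so that it runs without extra dependencies.

```python
def compute_e(a, b):
    """Evaluate a small two-input computation graph, one node at a time.

    The node operations below are assumed for illustration; the diff
    only states that inputs a and b produce an output e.
    """
    c = a + b  # assumed node: add the two inputs
    d = b - 1  # assumed node: subtract one from b
    e = c * d  # assumed output node: multiply the intermediate results
    return e


result = compute_e(1.5, 2.5)
print(result)  # c = 4.0, d = 1.5, so e = 6.0
```

Each intermediate variable plays the role of one graph node: it consumes the outputs of earlier nodes and passes its own output on, which is the structure the notebook text describes.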

|

316 | 316 | "\n", |

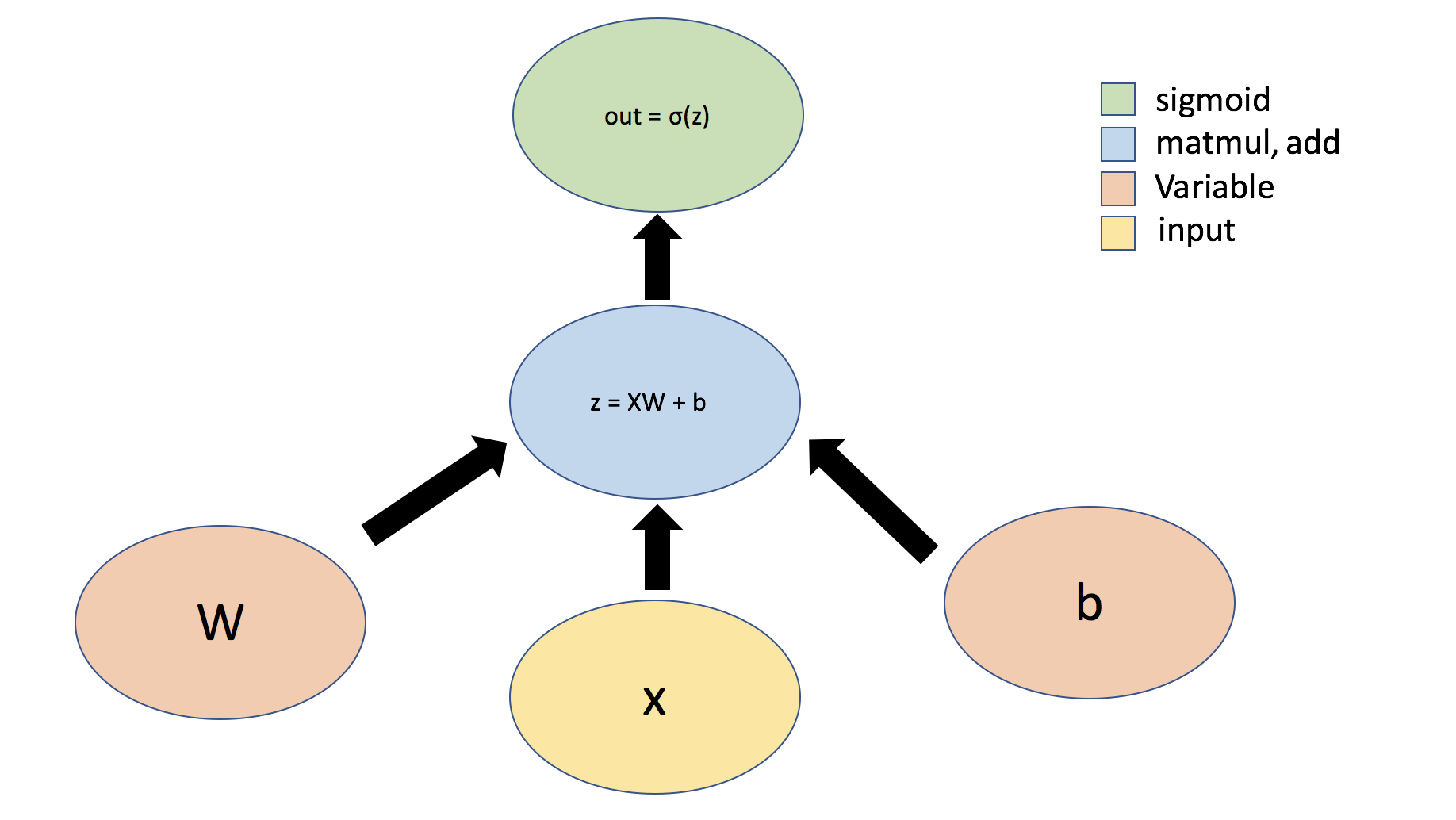

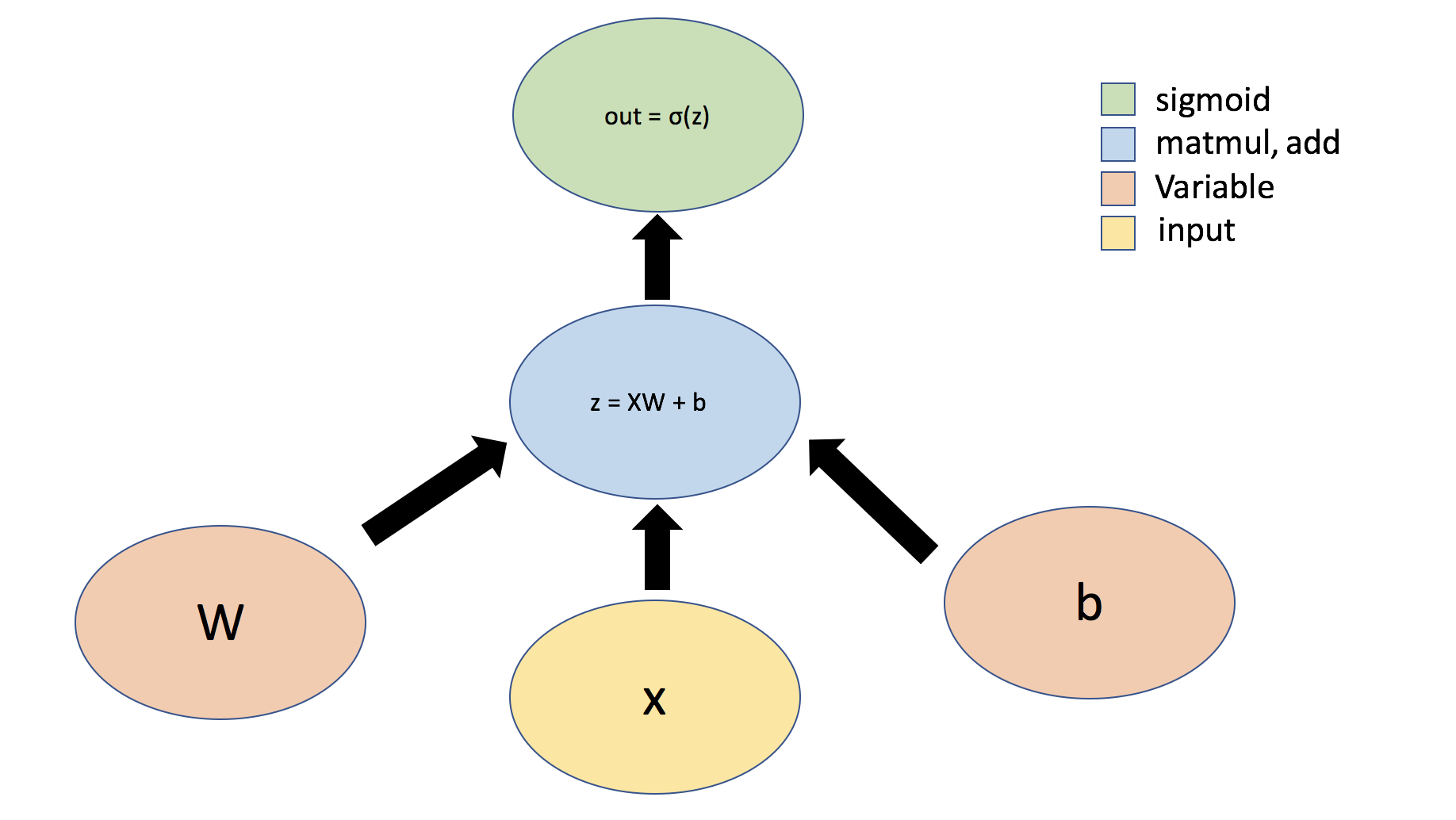

317 | 317 | "Let's first consider the example of a simple perceptron defined by just one dense layer: $ y = \\sigma(Wx + b)$, where $W$ represents a matrix of weights, $b$ is a bias, $x$ is the input, $\\sigma$ is the sigmoid activation function, and $y$ is the output. We can also visualize this operation using a graph:\n", |

318 | 318 | "\n", |

319 | | - "\n", |

| 319 | + "\n", |

320 | 320 | "\n", |

321 | 321 | "Tensors can flow through abstract types called [```Layers```](https://www.tensorflow.org/api_docs/python/tf/keras/layers/Layer) -- the building blocks of neural networks. ```Layers``` implement common neural networks operations, and are used to update weights, compute losses, and define inter-layer connectivity. We will first define a ```Layer``` to implement the simple perceptron defined above." |

322 | 322 | ] |
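The perceptron formula quoted in this hunk, $y = \sigma(Wx + b)$, can be sketched in a few lines of plain Python. This is a dependency-free illustration of the arithmetic only and is not the notebook's TensorFlow `Layer` implementation; the helper names `sigmoid` and `dense_forward` and the example values of `W`, `x`, and `b` are hypothetical.

```python
import math


def sigmoid(z):
    """Logistic sigmoid: squashes a real number into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def dense_forward(W, x, b):
    """Compute y = sigmoid(W x + b) for a single input vector x.

    W is a list of rows (one row per output unit), x is the input
    vector, and b holds one bias per output unit.
    """
    y = []
    for row, bias in zip(W, b):
        # Pre-activation for this output unit: dot(row, x) + bias
        z = sum(w * x_j for w, x_j in zip(row, x)) + bias
        y.append(sigmoid(z))
    return y


# Hypothetical example values, chosen so both pre-activations are zero.
W = [[0.0, 0.0], [1.0, -1.0]]
x = [2.0, 2.0]
b = [0.0, 0.0]

y = dense_forward(W, x, b)
print(y)  # [0.5, 0.5], since sigmoid(0) = 0.5
```

In a TensorFlow `Layer`, the weights `W` and `b` would typically be created as trainable variables and the forward computation would live in the layer's call method; the sketch above shows only the forward computation.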

|
